Lab 8: Support Vector Machines

From 6.034 Wiki

Revision as of 23:24, 12 October 2016

This lab is due by [todo] at 10:00pm.

To work on this lab, you will need to get the code: [todo]

Your answers for this lab belong in the main file lab7.py.

Problems

In this lab, you'll code subroutines for using and validating support vector machines.

Support Vector Machines

Vector Math

We'll start with some basic functions for manipulating vectors. For now, we will represent an n-dimensional vector as a list or tuple of n coordinates. dot_product should compute the dot product of two vectors, while norm computes the length of a vector. (There is a simple implementation of norm that uses dot_product.) Implement both functions:

def dot_product(u, v):

def norm(v):
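As a rough illustration of what these two functions compute, here is one possible sketch. The length check and the error it raises are our own assumptions, not requirements stated in this lab.

```python
from math import sqrt

def dot_product(u, v):
    """Sum of the coordinate-wise products of two equal-length vectors."""
    if len(u) != len(v):
        # Assumption: mismatched dimensions are treated as an error.
        raise ValueError("vectors must have the same dimension")
    return sum(ui * vi for ui, vi in zip(u, v))

def norm(v):
    """Euclidean length of v, computed as the square root of v . v."""
    return sqrt(dot_product(v, v))

print(dot_product([1, 2, 3], (4, 5, 6)))  # 32
print(norm((3, 4)))                       # 5.0
```

Because both functions only iterate over their arguments, lists and tuples can be mixed freely.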

Later, when you need to actually manipulate vectors, note that we have also provided methods for adding two vectors (vector_add) and for multiplying a scalar by a vector (scalar_mult). (See the API, below.)

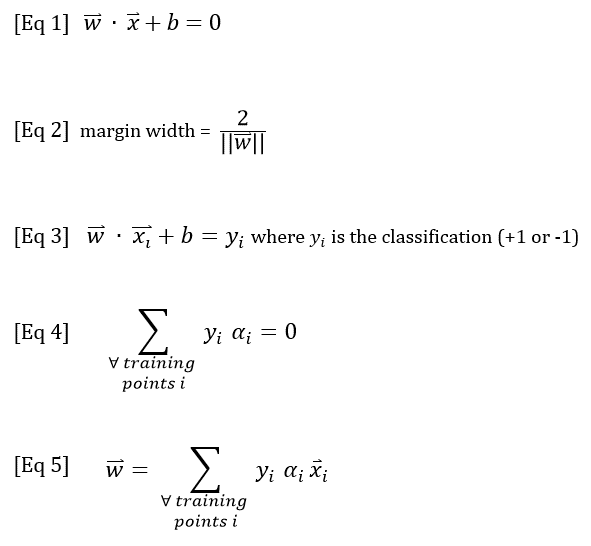

SVM Equations (reference only)

Next, we will use the following five SVM equations. Recall from recitation that Equations 1-3 define the decision boundary and the margin width, while Equations 4 & 5 can be used to calculate the alpha (supportiveness) values for the training points.
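For convenience, the five equations can be written out as below. This is a reconstruction from the standard SVM formulation and from how the equations are used in this lab, not a copy of the original figure. Equations 1 and 2 follow the text directly. Treating Equation 3 as the gutter constraint, and the ordering of Equations 4 and 5, are assumptions. Here y_i is the classification (+1 or -1) of training point x_i, and alpha_i is its supportiveness.

```latex
\begin{align}
\text{classification of } \vec{x} &= \operatorname{sign}(\vec{w}\cdot\vec{x} + b) \tag{1}\\
\text{margin width} &= \frac{2}{\lVert \vec{w} \rVert} \tag{2}\\
y_i\,(\vec{w}\cdot\vec{x}_i + b) &= 1 \quad \text{for each support vector } \vec{x}_i \tag{3}\\
\sum_i \alpha_i\, y_i &= 0 \tag{4}\\
\sum_i \alpha_i\, y_i\, \vec{x}_i &= \vec{w} \tag{5}
\end{align}
```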

For more information about how to apply these equations, see:

- Dylan's guide to solving SVM quiz problems

- Robert McIntyre's SVM notes

- SVM notes, from the Reference material and playlist

Using the SVM Boundary Equations

We will start by using Equation 1 to classify a point with a given SVM, ignoring the point's actual classification (if any). According to Equation 1, a point's classification is +1 when w·x + b > 0, or -1 when w·x + b < 0. If w·x + b = 0, the point lies on the decision boundary, so its classification is ambiguous.

First, evaluate the expression w·x + b to calculate how positive a point is. svm is a SupportVectorMachine object, and point is a Point (see the API below for details).

def positiveness(svm, point):

Next, classify a point as +1 or -1. If the point lies on the boundary, return 0.

def classify(svm, point):
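The two functions above might be sketched as follows. The SimpleNamespace objects are hypothetical stand-ins for the real SupportVectorMachine, DecisionBoundary, and Point classes. They only mimic the attributes listed in the API section (.boundary, .w, .b, .coords).

```python
from types import SimpleNamespace

def dot_product(u, v):
    return sum(ui * vi for ui, vi in zip(u, v))

def positiveness(svm, point):
    """Evaluate w . x + b for the given point."""
    return dot_product(svm.boundary.w, point.coords) + svm.boundary.b

def classify(svm, point):
    """Return +1 or -1, or 0 if the point lies exactly on the boundary."""
    score = positiveness(svm, point)
    if score > 0:
        return 1
    if score < 0:
        return -1
    return 0

# Hypothetical stand-in objects: the boundary is the vertical line x = 2.
svm = SimpleNamespace(boundary=SimpleNamespace(w=[1, 0], b=-2))
for coords in [(3, 5), (0, 0), (2, 7)]:
    p = SimpleNamespace(coords=coords)
    print(coords, positiveness(svm, p), classify(svm, p))
# (3, 5) 1 1
# (0, 0) -2 -1
# (2, 7) 0 0
```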

Then, use the SVM's current decision boundary to calculate its margin width (Equation 2):

def margin_width(svm):
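A minimal sketch of the margin-width calculation, assuming Equation 2 is the usual 2 / ||w||. The SimpleNamespace objects are hypothetical stand-ins for the API classes.

```python
from math import sqrt
from types import SimpleNamespace

def margin_width(svm):
    """Distance between the two gutters: 2 divided by the length of w."""
    w = svm.boundary.w
    return 2.0 / sqrt(sum(wi * wi for wi in w))

# Hypothetical stand-in SVM with w = [3, 4], so ||w|| = 5.
svm = SimpleNamespace(boundary=SimpleNamespace(w=[3, 4], b=1))
print(margin_width(svm))  # 0.4
```

Note that the offset b does not affect the margin width. Only the length of w does.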

Finally, we will check that the gutter constraint is satisfied. The gutter constraint requires that positiveness be +1 for positive support vectors, and -1 for negative support vectors. Our function will also check that no training points lie between the gutters -- that is, every training point should have a positiveness value indicating that it either lies on a gutter or outside the margin. (Note that the gutter constraint does not check whether points are classified correctly.) Implement check_gutter_constraint, which should return a set (not a list) of the training points that violate one or both conditions:

def check_gutter_constraint(svm):
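One way to check both conditions is sketched below. The three small classes are hypothetical stand-ins for those in svm_api.py, and their constructor signatures are assumptions. The comparison uses exact equality, which works for the integer example here. With floating-point boundaries, a small tolerance may be needed.

```python
def dot_product(u, v):
    return sum(ui * vi for ui, vi in zip(u, v))

# Hypothetical stand-ins for the svm_api.py classes (constructors are assumed).
class Point:
    def __init__(self, name, coords, classification, alpha=None):
        self.name, self.coords = name, coords
        self.classification, self.alpha = classification, alpha

class DecisionBoundary:
    def __init__(self, w, b):
        self.w, self.b = w, b

class SupportVectorMachine:
    def __init__(self, boundary, training_points, support_vectors):
        self.boundary = boundary
        self.training_points = training_points
        self.support_vectors = support_vectors

def positiveness(svm, point):
    return dot_product(svm.boundary.w, point.coords) + svm.boundary.b

def check_gutter_constraint(svm):
    """Return the set of training points that violate either condition."""
    violations = set()
    # Condition 1: each support vector sits exactly on its own gutter.
    for sv in svm.support_vectors:
        if positiveness(svm, sv) != sv.classification:
            violations.add(sv)
    # Condition 2: no training point lies strictly between the gutters.
    for p in svm.training_points:
        if -1 < positiveness(svm, p) < 1:
            violations.add(p)
    return violations

a = Point("A", (3, 0), +1)    # on the positive gutter: fine
b = Point("B", (1, 1), -1)    # on the negative gutter: fine
c = Point("C", (2.5, 0), +1)  # inside the margin: violation
d = Point("D", (4, 0), -1)    # support vector off its gutter: violation
svm = SupportVectorMachine(DecisionBoundary([1, 0], -2), [a, b, c, d], [a, b, d])
print(sorted(p.name for p in check_gutter_constraint(svm)))  # ['C', 'D']
```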

Using the Supportiveness Equations

To train a support vector machine, every training point is assigned a supportiveness value (also known as an alpha value, or a Lagrange multiplier), representing how important the point is in determining (or "supporting") the decision boundary. The supportiveness values must satisfy a number of conditions, which we will check below.

First, implement check_alpha_signs to check each point's supportiveness value. Each training point should have a non-negative supportiveness. Specifically, all support vectors should have positive supportiveness, while all non-support vectors should have a supportiveness of 0. This function, like check_gutter_constraint above, should return a set of the training points that violate either of the supportiveness conditions.

def check_alpha_signs(svm):
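A possible sketch of this check follows. The classes are hypothetical stand-ins for svm_api.py, and the sketch assumes every training point already has a numeric alpha assigned.

```python
# Hypothetical stand-ins for the svm_api.py classes (constructors are assumed).
class Point:
    def __init__(self, name, coords, classification, alpha):
        self.name, self.coords = name, coords
        self.classification, self.alpha = classification, alpha

class SupportVectorMachine:
    def __init__(self, boundary, training_points, support_vectors):
        self.boundary = boundary
        self.training_points = training_points
        self.support_vectors = support_vectors

def check_alpha_signs(svm):
    """Return the set of training points whose alpha has the wrong sign."""
    violations = set()
    for p in svm.training_points:
        if p in svm.support_vectors:
            ok = p.alpha > 0    # support vectors need positive alpha
        else:
            ok = p.alpha == 0   # all other points need alpha of exactly 0
        if not ok:
            violations.add(p)
    return violations

a = Point("A", (3, 0), +1, alpha=0.5)  # support vector, alpha > 0: fine
b = Point("B", (1, 0), -1, alpha=0)    # support vector with zero alpha: violation
c = Point("C", (5, 0), +1, alpha=0.2)  # non-support vector, nonzero alpha: violation
d = Point("D", (0, 0), -1, alpha=0)    # non-support vector, zero alpha: fine
e = Point("E", (1, 2), -1, alpha=-1)   # negative alpha: violation
svm = SupportVectorMachine(None, [a, b, c, d, e], [a, b, e])
print(sorted(p.name for p in check_alpha_signs(svm)))  # ['B', 'C', 'E']
```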

Implement check_alpha_equations to check that the SVM's supportiveness values are consistent with its boundary equation and the classifications of its training points. Return True if both Equations 4 and 5 are satisfied, otherwise False.

def check_alpha_equations(svm):
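The sketch below assumes Equation 4 is the constraint that the alpha-weighted classifications sum to zero, and Equation 5 is the constraint that the alpha-weighted, classification-signed training points sum to w. The classes and the two vector helpers are hypothetical stand-ins for svm_api.py. Exact equality is used for simplicity, so float-valued data may call for a tolerance.

```python
# Hypothetical stand-ins for the svm_api.py helpers and classes.
def vector_add(u, v):
    return [ui + vi for ui, vi in zip(u, v)]

def scalar_mult(c, v):
    return [c * vi for vi in v]

class Point:
    def __init__(self, name, coords, classification, alpha):
        self.name, self.coords = name, coords
        self.classification, self.alpha = classification, alpha

class DecisionBoundary:
    def __init__(self, w, b):
        self.w, self.b = w, b

class SupportVectorMachine:
    def __init__(self, boundary, training_points, support_vectors):
        self.boundary = boundary
        self.training_points = training_points
        self.support_vectors = support_vectors

def check_alpha_equations(svm):
    """True if sum(alpha*y) == 0 and sum(alpha*y*x) == w, else False."""
    w = list(svm.boundary.w)
    scalar_sum = 0
    vector_sum = [0] * len(w)
    # Non-support vectors have alpha 0, so summing over all points is safe.
    for p in svm.training_points:
        weight = p.alpha * p.classification
        scalar_sum += weight
        vector_sum = vector_add(vector_sum, scalar_mult(weight, p.coords))
    return scalar_sum == 0 and vector_sum == w

a = Point("A", (3, 0), +1, alpha=0.5)
b = Point("B", (1, 0), -1, alpha=0.5)
svm = SupportVectorMachine(DecisionBoundary([1, 0], -2), [a, b], [a, b])
print(check_alpha_equations(svm))  # True

b.alpha = 0.25  # now the weighted classifications no longer sum to 0
print(check_alpha_equations(svm))  # False
```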

Classification Accuracy

Once a support vector machine has been trained -- or even while it is being trained -- we want to know how well it has classified the training data. Write a function that checks whether the training points were classified correctly and returns a set containing the training points that were misclassified, if any.

def misclassified_training_points(svm):
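As an illustration, misclassified points can be collected by comparing each point's computed classification with its known one. The classes below are hypothetical stand-ins for svm_api.py. In this sketch a point lying exactly on the boundary (computed classification 0) counts as misclassified, which is our assumption.

```python
def dot_product(u, v):
    return sum(ui * vi for ui, vi in zip(u, v))

# Hypothetical stand-ins for the svm_api.py classes (constructors are assumed).
class Point:
    def __init__(self, name, coords, classification):
        self.name, self.coords = name, coords
        self.classification = classification

class DecisionBoundary:
    def __init__(self, w, b):
        self.w, self.b = w, b

class SupportVectorMachine:
    def __init__(self, boundary, training_points):
        self.boundary = boundary
        self.training_points = training_points

def classify(svm, point):
    score = dot_product(svm.boundary.w, point.coords) + svm.boundary.b
    return 1 if score > 0 else (-1 if score < 0 else 0)

def misclassified_training_points(svm):
    """Return the set of training points whose computed class is wrong."""
    return {p for p in svm.training_points
            if classify(svm, p) != p.classification}

a = Point("A", (3, 0), +1)  # x > 2, classified +1: correct
b = Point("B", (1, 0), -1)  # x < 2, classified -1: correct
c = Point("C", (0, 4), +1)  # x < 2 but labeled +1: misclassified
svm = SupportVectorMachine(DecisionBoundary([1, 0], -2), [a, b, c])
print([p.name for p in misclassified_training_points(svm)])  # ['C']
```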

Extra Credit Project: Train a support vector machine

In class and in this lab, we have seen how to calculate the final parameters of an SVM (given the decision boundary), and we've used the equations to assess how well an SVM has been trained, but we haven't actually attempted to train an SVM. In practice, training an SVM is a hill-climbing problem in alpha-space using the Lagrangian. There's a bit of math involved. The following resources may be helpful:

- SVM lecture notes from a machine-learning class at NYU

- paper on Sequential Minimal Optimization

- SVM notes, from the Reference material and playlist

- SVM slides, from the Reference material and playlist

- Wikipedia articles on SVM math and Sequential Minimal Optimization

For some unspecified amount of extra credit, extend your code and/or the API to train a support vector machine. Possible extensions include implementing kernel functions or writing code to graphically display your training data and SVM boundary. If you can come up with a reasonably simple procedure for training an SVM, preferably using only built-in Python packages, we may even use your code in a future 6.034 lab! (With your permission, of course.)

To receive your extra credit, send your code to 6.034-2015-support@mit.edu by December 4, 2015, ideally with some sort of documentation. (Briefly explain what you did and how you did it.)

Survey

Please answer these questions at the bottom of your lab7.py file:

- NAME: What is your name? (string)

- COLLABORATORS: Other than 6.034 staff, whom did you work with on this lab? (string, or empty string if you worked alone)

- HOW_MANY_HOURS_THIS_LAB_TOOK: Approximately how many hours did you spend on this lab? (number or string)

- WHAT_I_FOUND_INTERESTING: Which parts of this lab, if any, did you find interesting? (string)

- WHAT_I_FOUND_BORING: Which parts of this lab, if any, did you find boring or tedious? (string)

- (optional) SUGGESTIONS: What specific changes would you recommend, if any, to improve this lab for future years? (string)

(We'd ask which parts you find confusing, but if you're confused you should really ask a TA.)

When you're done, run the online tester to submit your code.

API

Support Vector Machines

The file svm_api.py defines the Point, DecisionBoundary, and SupportVectorMachine classes, as well as some helper functions for vector math, all described below.

Point

A Point has the following attributes:

- point.name, the name of the point (a string).

- point.coords, the coordinates of the point, represented as a vector (a tuple or list of numbers).

- point.classification, the classification of the point, if known. Note that classification (if any) is a number, typically +1 or -1.

- point.alpha, the supportiveness (alpha) value of the point, if assigned.

DecisionBoundary

A DecisionBoundary is defined by two parameters: a normal vector w, and an offset b. w is represented as a vector: a list or tuple of coordinates. You can access these parameters using the attributes .w and .b.

SupportVectorMachine

A SupportVectorMachine is a classifier that uses a DecisionBoundary to classify points. It has a list of training points and optionally a list of support vectors. You can access these parameters using these attributes:

- svm.boundary, the SVM's DecisionBoundary.

- svm.training_points, a list of Point objects with known classifications.

- svm.support_vectors, a list of Point objects that serve as support vectors for the SVM. Every support vector is also a training point.
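To make the attribute layout concrete, here is a small sketch built from stand-in classes. These are not the real definitions in svm_api.py. The attribute names come from the descriptions above, but the constructor signatures and argument order are assumptions.

```python
# Hypothetical stand-ins: only the attribute names match the API described above.
class Point:
    def __init__(self, name, coords, classification=None, alpha=None):
        self.name = name
        self.coords = coords
        self.classification = classification
        self.alpha = alpha

class DecisionBoundary:
    def __init__(self, w, b):
        self.w = w
        self.b = b

class SupportVectorMachine:
    def __init__(self, boundary, training_points, support_vectors=None):
        self.boundary = boundary
        self.training_points = training_points
        self.support_vectors = support_vectors or []

a = Point("A", (3, 0), classification=+1, alpha=0.5)
b = Point("B", (1, 0), classification=-1, alpha=0.5)
c = Point("C", (5, 2), classification=+1, alpha=0)
svm = SupportVectorMachine(DecisionBoundary([1, 0], -2), [a, b, c], [a, b])

print(svm.boundary.w, svm.boundary.b)           # [1, 0] -2
print([p.name for p in svm.training_points])    # ['A', 'B', 'C']
print([p.name for p in svm.support_vectors])    # ['A', 'B']
# Every support vector is also a training point.
print(all(sv in svm.training_points for sv in svm.support_vectors))  # True
```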

Helper functions for vector math

vector_add: Given two vectors represented as lists or tuples of coordinates, returns their sum as a list of coordinates.

scalar_mult: Given a constant scalar and a vector (as a tuple or list of coordinates), returns a scaled list of coordinates.
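The behavior of these two helpers can be sketched as below. This is an illustration of the described behavior, not the code in svm_api.py. In particular, the argument order of scalar_mult (scalar first, then vector) is assumed from the description.

```python
def vector_add(u, v):
    """Return the coordinate-wise sum of two vectors as a list."""
    if len(u) != len(v):
        # Assumption: mismatched dimensions are treated as an error.
        raise ValueError("vectors must have the same dimension")
    return [ui + vi for ui, vi in zip(u, v)]

def scalar_mult(c, v):
    """Return the vector v scaled by the constant c, as a list."""
    return [c * vi for vi in v]

print(vector_add((1, 2), [3, 4]))  # [4, 6]
print(scalar_mult(3, (1, -2)))     # [3, -6]
```

Both helpers return lists even when given tuples, so comparing a result against a tuple with == will be False unless one side is converted first.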